What’s the primary goal of every business?

The answer is simple: bringing results.

And as you have landed here, you are probably wondering:

- Can I do anything to increase my conversion rate?

- How can I turn regular visitors into paying customers?

That’s exactly what we will discuss today.

We will show that A/B testing can be a game changer for your website. Depending on your goals, properly executed A/B tests can result in a higher click-through rate (CTR), more conversions, and, ultimately, more sales.

Interested? Let’s have a look:

- What is A/B testing?

- What are the benefits of A/B testing?

- How do you perform an A/B test? (based on real examples!)

What is A/B Testing?

A/B testing (or split testing) is a method of comparing two versions of a website, a specific element of it, or an app to check which one performs better. It is essentially an experiment in which two (or more) page variants are shown to users at random. Statistical analysis then tells you which version performs better for a given conversion goal.

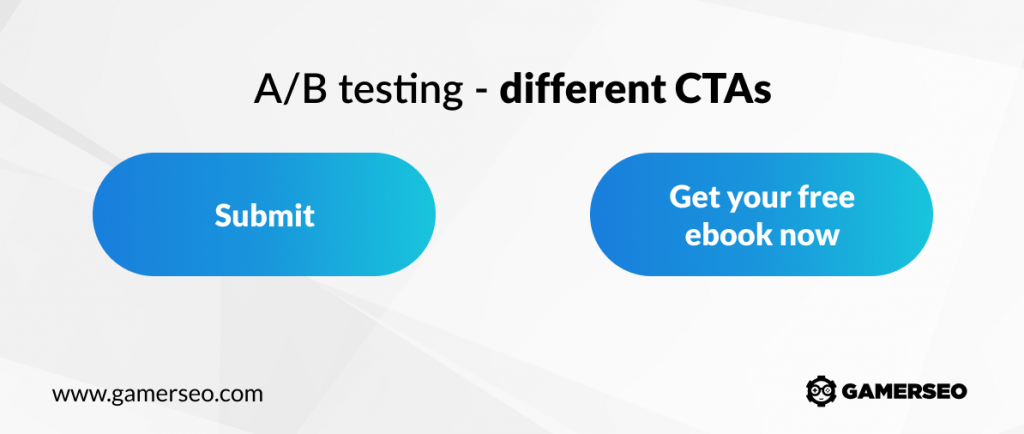

Img alt: A/B test of call to action aimed at first time visitors aiming at conversion rate optimization

Let’s say that your goal is to optimize the lead generation of your online store, but you are not sure which CTA copy to use on your landing page. Instead of making this decision based on personal preference, you could use A/B testing to see which option is the better incentive for your target audience and, therefore, results in more conversions.

First, you’d create one variant with a CTA button that says, for example, “submit”, and another with a button that says “get your free ebook now.” Next, you’d run a straightforward test, splitting your traffic evenly between the two.

At the end of the test, you’d examine the results to see if the lead generation increased for one of the variants. If so, you could implement it permanently for all site visitors to increase leads even further.
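To make the even traffic split from this example more concrete, here is a minimal sketch in Python. It assumes every visitor has some stable identifier (for instance a cookie value). The function name `assign_variant`, the experiment name `cta-copy`, and the visitor IDs are hypothetical and only serve as an illustration; a real testing tool handles this step for you.

```python
import hashlib

# The two CTA copies from the example above.
VARIANTS = ("submit", "get your free ebook now")


def assign_variant(visitor_id: str, experiment: str = "cta-copy") -> str:
    """Map a visitor to one variant in a stable, roughly 50/50 way.

    Hashing the visitor ID together with the experiment name means the
    same visitor always sees the same variant on every visit.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).hexdigest()
    bucket = int(digest, 16) % len(VARIANTS)
    return VARIANTS[bucket]


# Simulate 10,000 hypothetical visitors and count how the traffic is split.
counts = {variant: 0 for variant in VARIANTS}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Hashing instead of picking at random on every page load is a design choice: a returning visitor keeps seeing the same button, so their behavior is counted toward one variant only.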

Obviously, this is a simplified version of a test, and there are many ways to adapt the A/B testing process to your goals. The same approach can be used to compare two different product pages, sign-up forms, landing pages, or anything else.

Does A/B Testing Really Work?

At this point, people usually wonder: Can such a slight change really make a difference?

Most importantly, A/B testing takes the guesswork out of that question. It allows you to shift from “we think” to “we know.” Because you make data-driven decisions and measure the impact of changes on your metrics, you can verify whether each change actually produces positive results.

With an A/B testing strategy, you can solve many of the issues that inhibit the growth of your business. For instance, it helps you diagnose the reasons for cart abandonment. You can also check how useful website elements such as customer testimonials are, or whether your drop-down menu could be improved.

That said, A/B testing is by no means a walk in the park. Although it may seem fairly simple to learn and execute, it is difficult to pull off without the right tools and correct data. This is why a lot of companies don’t get the results they want and end up frustrated with their testing efforts.

How to perform an A/B test?

Research – gather data on the number of visitors, the pages with the most traffic, the conversion goals of different pages, and other relevant elements. This tells you where testing is worth the effort: if an old blog post hardly gets any readers anymore, you don’t want to waste your energy on it.

Observe and formulate hypotheses – as you collect the necessary data, your goal is to formulate data-driven hypotheses that you will later test.

Create variations – create a variation according to your hypothesis, and A/B test it against the existing version. A variation is another version of your original page with the changes that you want to test. You can also test more than one variation against the original (often called A/B/n testing) to determine the top performer. For example, if many people abandon their shopping carts, you might get great results with visible social proof or highlighted information about free shipping. Also, decide whether the mobile version of the website will take part in the test.

Run test – start the test and wait for the predefined period needed to reach statistically significant results. Remember that no matter which method you choose, your testing method and statistical accuracy will determine the end results. Decide in advance how long the test should run. To do so, keep in mind your average daily and monthly visitors, your estimated existing conversion rate, the minimum improvement in conversion rate you expect, the number of variations (including control), the percentage of visitors included in the test, and so on.
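The inputs listed in this step can be turned into a rough estimate of how long a test needs to run. The sketch below uses the standard sample-size approximation for comparing two conversion rates; it is one common way of doing this, not necessarily what your testing tool does. The function names and all numbers (3% baseline conversion rate, 20% expected relative improvement, 1,500 daily visitors) are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Visitors needed in EACH variation to detect the expected improvement.

    baseline_rate: existing conversion rate, e.g. 0.03 for 3%
    relative_lift: minimum improvement you expect, e.g. 0.20 for +20%
    alpha:         accepted false-positive rate (0.05 = 95% confidence)
    power:         chance of detecting the improvement if it is real
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def estimated_duration_days(n_per_variation, variations, daily_visitors, traffic_share=1.0):
    """Days needed, given the number of variations (including control)
    and the share of visitors that is included in the test."""
    total_needed = n_per_variation * variations
    return math.ceil(total_needed / (daily_visitors * traffic_share))


# Hypothetical inputs, for illustration only.
n = sample_size_per_variation(baseline_rate=0.03, relative_lift=0.20)
days = estimated_duration_days(n, variations=2, daily_visitors=1500, traffic_share=1.0)
print(f"Visitors per variation: {n}, estimated duration: {days} days")
```

Note how quickly the required sample grows when the expected improvement is small or the baseline conversion rate is low. This is one reason low-traffic pages are poor candidates for testing.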

Analyze results and deploy changes – Once your test concludes, analyze the test results by considering metrics like percentage increase, confidence level, direct and indirect impact on other metrics, etc.
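As a minimal sketch of the analysis step, the snippet below computes the percentage increase (relative lift) and checks statistical significance with a two-proportion z-test. This is a classic frequentist approach and only one of several options; many tools use their own statistics engine. The function name `analyze` and the visitor and conversion counts are hypothetical.

```python
import math
from statistics import NormalDist


def analyze(control_conv, control_n, variant_conv, variant_n, alpha=0.05):
    """Compare the conversion rates of control and variant.

    Returns the relative lift, the z-score, the two-sided p-value, and
    whether the difference is significant at the chosen alpha
    (alpha=0.05 corresponds to a 95% confidence level).
    """
    p_c = control_conv / control_n
    p_v = variant_conv / variant_n
    lift = (p_v - p_c) / p_c

    # Pooled standard error under the assumption of "no real difference".
    pooled = (control_conv + variant_conv) / (control_n + variant_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    z = (p_v - p_c) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    return {"lift": lift, "z": z, "p_value": p_value, "significant": p_value < alpha}


# Hypothetical data: 1,000 visitors per version, 100 vs. 150 conversions.
result = analyze(control_conv=100, control_n=1000, variant_conv=150, variant_n=1000)
print(f"Lift: {result['lift']:.1%}, p-value: {result['p_value']:.4f}, "
      f"significant: {result['significant']}")
```

A significant result on the main goal is not the whole story: check the indirect impact on other metrics as well before deploying the winning version.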

Example of A/B Test Case Study by GamerSEO

One of our clients is a website that specializes in trading virtual goods from MMORPG games. They reached out to us to see if we could help them achieve a higher conversion rate. After analyzing the website’s original design, we decided to perform two A/B tests on two separate subpages.

Hypothesis

Before starting the tests, the following hypotheses were made:

- Simplifying the design will increase conversions.

- Changing the arrangement of steps on the page and simplifying the design will increase conversions.

Launch Conditions and Goals

The tests lasted 3 months and were set up with two goals. The first and primary goal was to increase the conversion rate, i.e. the share of users placing an order. The second goal was to increase the number of people signing up (creating an account).

In both cases, the test group consisted of 100% of users on the desktop version of the Chrome browser. Mobile devices were excluded from the tests. The users were presented with two variants – Original and Variant 1.

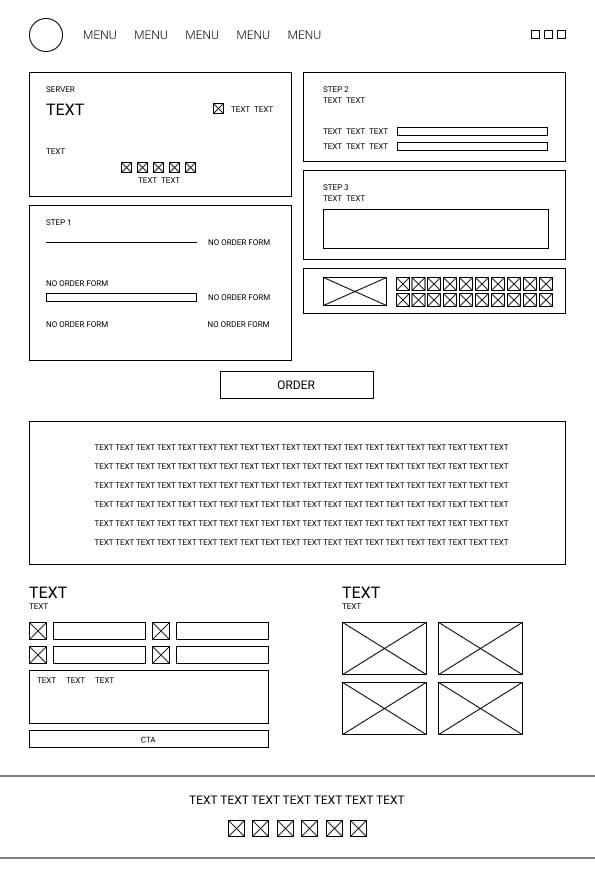

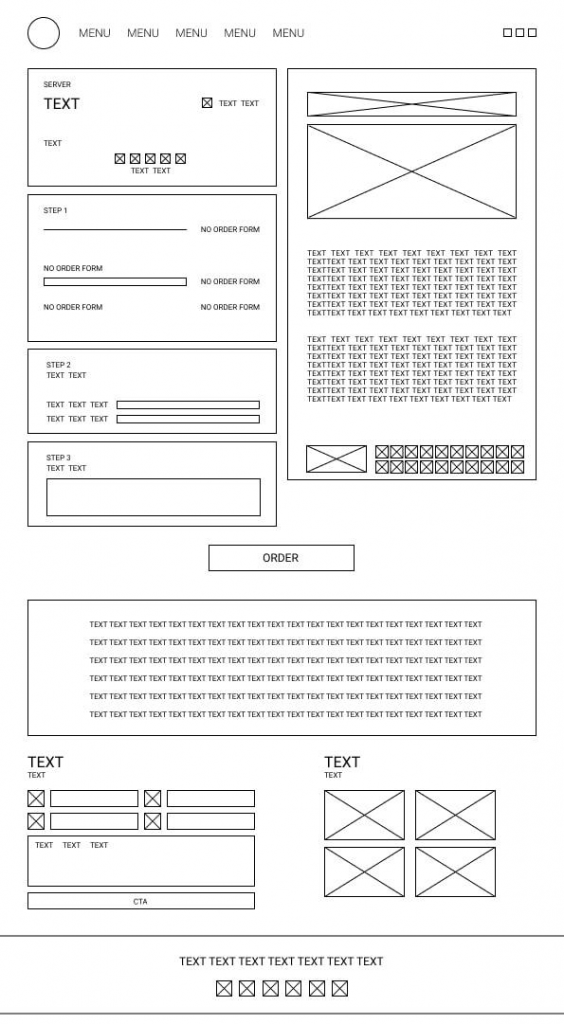

Original Variant

New Variant

First A/B Test: Results

The main goal – placing an order:

Original – Probability to be best – 17%

Variant 1 – Probability to be best – 83%

The ‘Probability to Be Best’ indicates each variation’s long-term probability of outperforming all other live variations, given the data collected since the creation or change of any variation included in the test.

Secondary goal – creating an account

Original – Probability to be best – 75%

Variant 1 – Probability to be best – 25%
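For readers curious about where a ‘Probability to Be Best’ figure can come from, here is a minimal Bayesian sketch. It is an assumption about the general technique (Beta posteriors compared by random sampling), not a description of how the tool used in this case study calculates its numbers. The function name and the visitor and conversion counts are hypothetical and are not the data from our tests.

```python
import random


def probability_to_be_best(results, draws=20_000, seed=42):
    """Estimate each variation's probability of having the highest
    true conversion rate.

    results: dict mapping a variation name to (conversions, visitors)

    Each variation gets a Beta(1 + conversions, 1 + non-conversions)
    posterior (uniform prior). We repeatedly draw one plausible conversion
    rate per variation and count how often each variation comes out on top.
    """
    rng = random.Random(seed)
    wins = {name: 0 for name in results}
    for _ in range(draws):
        samples = {
            name: rng.betavariate(1 + conv, 1 + visitors - conv)
            for name, (conv, visitors) in results.items()
        }
        wins[max(samples, key=samples.get)] += 1
    return {name: count / draws for name, count in wins.items()}


# Hypothetical counts, for illustration only.
data = {"Original": (100, 2000), "Variant 1": (115, 2000)}
for name, prob in probability_to_be_best(data).items():
    print(f"{name}: probability to be best = {prob:.0%}")
```

Seen this way, a result such as 83% vs. 17% means that the variant is more likely to be the better one, but the data still leave room for the original to be the better performer.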

Second A/B Test: Results

The main goal – placing an order:

Original – Probability to be best – 26%

Variant 1 – Probability to be best – 74%

Secondary goal – creating an account

Original – Probability to be best – 9%

Variant 1 – Probability to be best – 91%

Summary of the Findings

The hypotheses set before the start of the tests were confirmed for the main goals (simplifying the design and the purchase process improves conversion), although in no case did the tests show a 100% probability in favor of the “new version”. It is important to note that mobile devices did not take part in the tests.

The content on the subpages can have a significant impact on the user’s experience. When high-quality content is prominent and well thought out, it can entice the user to place an order, and it shouldn’t be treated only as filler in an SEO strategy.

In addition to implementing the SEO strategy, the content should be educational, providing comprehensive answers to users’ questions. All businesses want their users to make a wise and mindful choice instead of making purchases on shady websites.

High-converting users have a real impact on building trust in the domain, which translates into positions in SERPs.

Placing an order is only the first step to the final purchase. It would be necessary to perform similar tests and analyze the impact of user interfaces on order completion.

SEO enthusiast and digital marketing strategist. My expertise lies in optimizing websites for organic traffic growth and search engine visibility. I carry out, among other things, SEO tests, keyword research, and analytical activities using Google Analytics. Privately, I am a lover of mountains and bicycle trips.